Latest Posts

Data Security at Pragmatic77: What You Need to Know

Pragmatic77, a leading technology company based in Indonesia, has drawn attention in the digital industry with its latest innovations. Behind these technological advances, however, data security remains a top priority.…

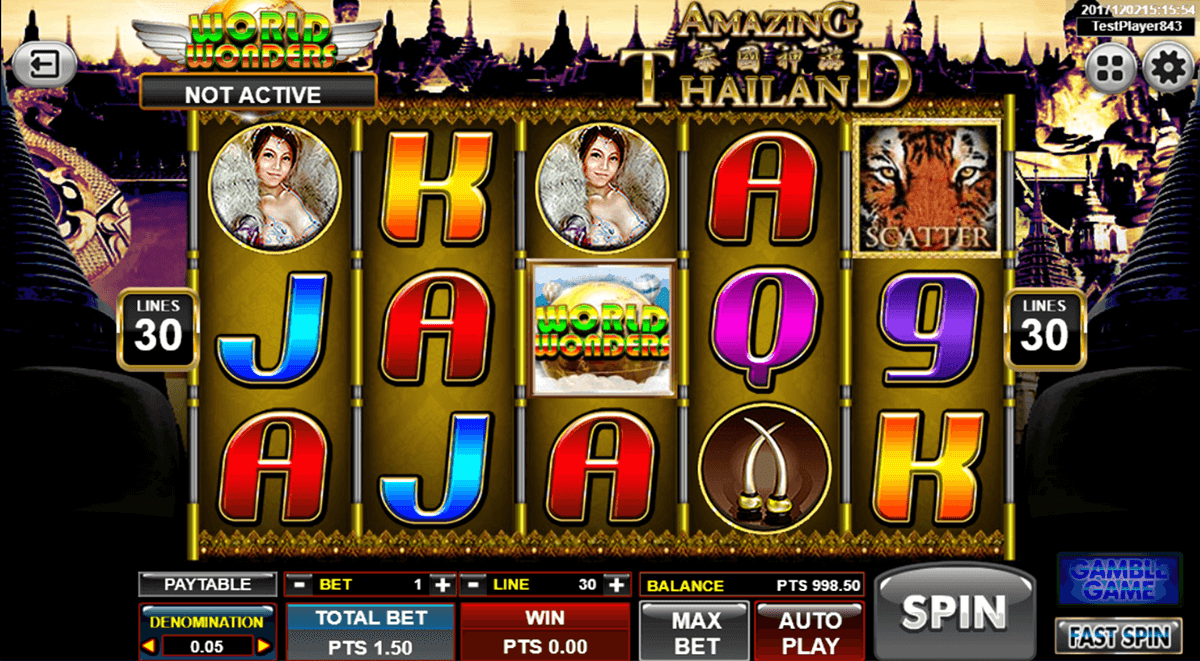

What Types of Games Does Pragmatic77 Offer?

Pragmatic77 has come into the spotlight in the online gambling industry, attracting players with the wide range of games it offers. From entertaining slots to challenging table games, the platform offers…

A Complete Guide to Withdrawing on Pragmatic77

Playing online games is increasingly becoming a top choice for many people in this digital era, not only as entertainment but also as an opportunity to earn additional income. One of…

The Mystery of Slot Galaxy77: Uncovering Interesting Facts Behind the Scenes

In this all-digital world, online slots have grown into more than just games. They have become a phenomenon, drawing players into a virtual world full of promise…

Strategies for Winning at Slots on Dolar 88

In today's digital era, playing online slots has become one of the most popular activities among online gambling enthusiasts. Dolar 88, as one of the trusted online gambling sites,…